What are examples of explainable AI

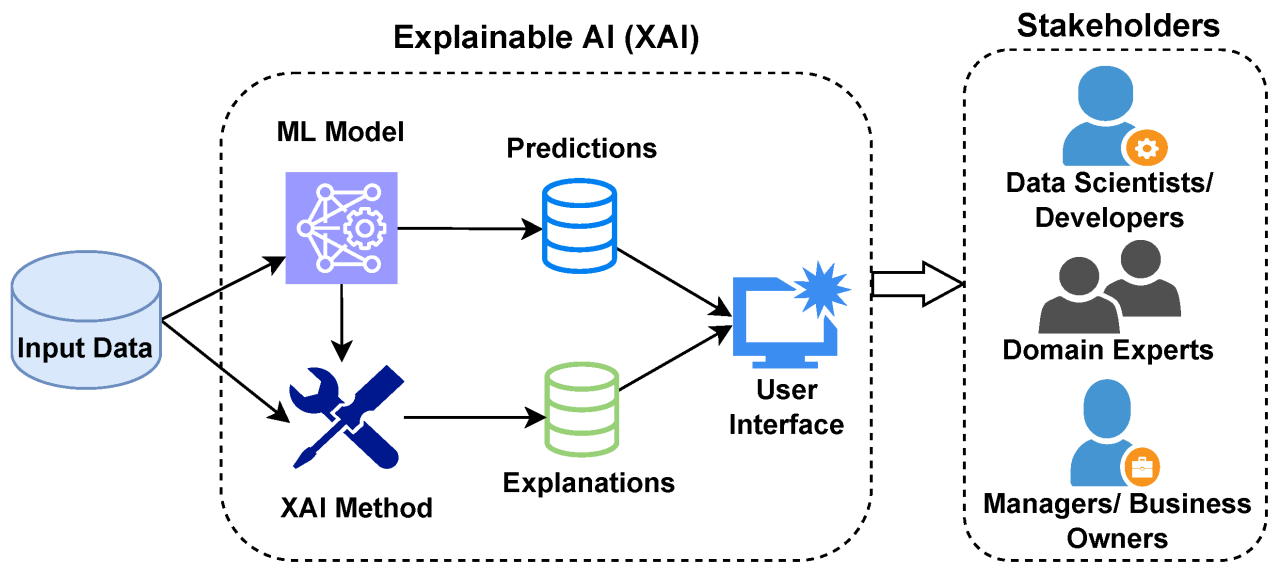

For example, hospitals can use explainable AI for cancer detection and treatment, where algorithms show the reasoning behind a given model's decision. This makes it easier for doctors not only to make treatment decisions but also to give their patients data-backed explanations.

What is explainable AI

Explainable artificial intelligence (XAI) is a set of processes and methods that allows human users to comprehend and trust the results and output created by machine learning algorithms. Explainable AI is used to describe an AI model, its expected impact and its potential biases.

Explainability (also referred to as “interpretability”) is the concept that a machine learning model and its output can be explained in a way that “makes sense” to a human being at an acceptable level.

What is explainable AI for kids : One of the best-known examples of AI is voice assistants such as Siri and Alexa, which use natural language processing.

Is ChatGPT explainable AI

ChatGPT is the antithesis of XAI (explainable AI): it is not a tool that should be used in situations where trust and explainability are critical requirements.

What are the 4 types of AI with examples : The 4 main types of artificial intelligence are:

- Reactive machines. Reactive machines are AI systems that have no memory and are task specific, meaning that an input always delivers the same output.

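- Limited memory machines. The next type of AI in its evolution is limited memory: these systems can use past data to inform their decisions.

- Theory of mind. AI that could understand the thoughts, emotions, and intentions of others; this type does not yet exist.

- Self-awareness. AI with an awareness of its own internal states; this type is also still hypothetical.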

When Elon Musk created his artificial-intelligence startup xAI last year, he said its researchers would work on existential problems like understanding the nature of the universe. Musk is also using xAI to pursue a more worldly goal: joining forces with his social-media company X.

Model explainability refers to being able to understand how a machine learning model arrives at its output. For example, if a healthcare model predicts whether a patient has a particular disease, explainability means being able to see which patient characteristics drove that prediction.
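To make the healthcare example concrete, here is a minimal sketch of one simple kind of explanation, assuming the model is a logistic regression (an assumption; the source does not say which model is used). Because such a model is linear in log-odds, each feature's contribution to one patient's prediction can be read off as its weight times how far the patient's value lies from a baseline patient. The feature names, weights, bias, and baseline below are all hypothetical, chosen only for illustration.

```python
import math

# Hypothetical weights for a toy logistic-regression disease-risk model.
# Illustrative numbers only; they do not come from any real clinical model.
WEIGHTS = {"age": 0.04, "bmi": 0.08, "smoker": 1.2}
BIAS = -6.0


def log_odds(patient: dict[str, float]) -> float:
    """Linear score (log-odds of disease) for one patient."""
    return BIAS + sum(w * patient[name] for name, w in WEIGHTS.items())


def predict_proba(patient: dict[str, float]) -> float:
    """Probability of disease: the logistic function applied to the log-odds."""
    return 1.0 / (1.0 + math.exp(-log_odds(patient)))


def explain(patient: dict[str, float], baseline: dict[str, float]) -> dict[str, float]:
    """Each feature's contribution to the log-odds, relative to a baseline patient.

    Because the model is linear in log-odds, these contributions add up
    exactly to log_odds(patient) - log_odds(baseline).
    """
    return {name: w * (patient[name] - baseline[name]) for name, w in WEIGHTS.items()}


if __name__ == "__main__":
    patient = {"age": 60, "bmi": 31, "smoker": 1}
    baseline = {"age": 45, "bmi": 25, "smoker": 0}  # hypothetical "typical" patient

    print(f"P(disease) = {predict_proba(patient):.2f}")
    # List the reasons behind the prediction, largest effect first.
    for name, c in sorted(explain(patient, baseline).items(), key=lambda kv: -abs(kv[1])):
        print(f"{name:>7}: {c:+.2f} log-odds")
```

Running it prints the predicted probability followed by the features ranked by contribution, so a doctor could see that smoking status moved this toy prediction more than age or BMI did. This shortcut only holds for linear scores; deep models need more elaborate attribution methods.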

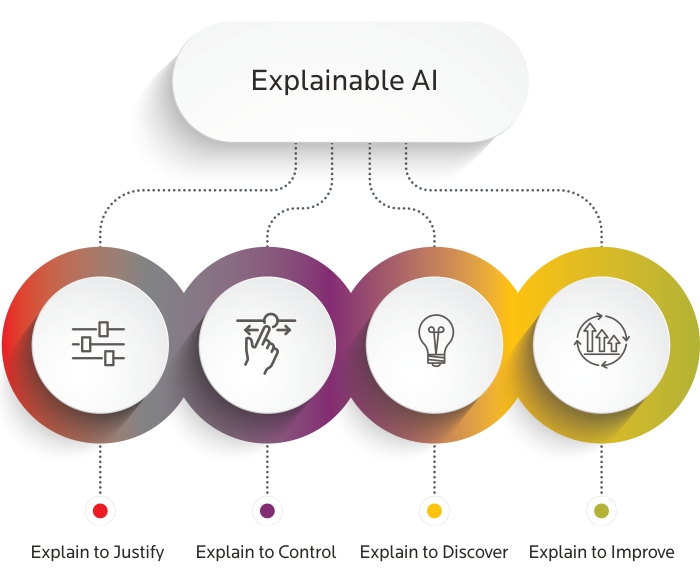

What are the four principles of explainable AI

The four principles of explainable AI are termed explanation, meaningful, explanation accuracy, and knowledge limits.

Even as explainability gains importance, it is becoming significantly harder to achieve. Modeling techniques that power many AI applications today, such as deep learning and neural networks, are inherently more difficult for humans to understand.

Siri is Apple's voice-enabled virtual assistant powered by artificial intelligence, machine learning, and voice recognition. Using the commands "Siri" or "Hey Siri," you can activate Siri and ask it to perform various tasks, such as texting a friend, opening an app, pulling up a photo, or playing your favorite song.

What type of AI is ChatGPT

ChatGPT stands for Chat Generative Pre-trained Transformer and was developed by the AI research company OpenAI. It is an artificial intelligence (AI) chatbot that can process natural human language and generate a response.

Does Elon Musk still own OpenAI : Elon Musk was one of the co-founders of OpenAI, but he is no longer affiliated with the organization. OpenAI is not owned by any single individual. It is governed by a non-profit parent, OpenAI Inc., which controls a for-profit arm that has raised investment from companies such as Microsoft.

Who owns ChatGPT

OpenAI LP

ChatGPT is owned by OpenAI, an artificial intelligence research lab consisting of the for-profit OpenAI LP and its parent company, the non-profit OpenAI Inc.

Advanced Manufacturing

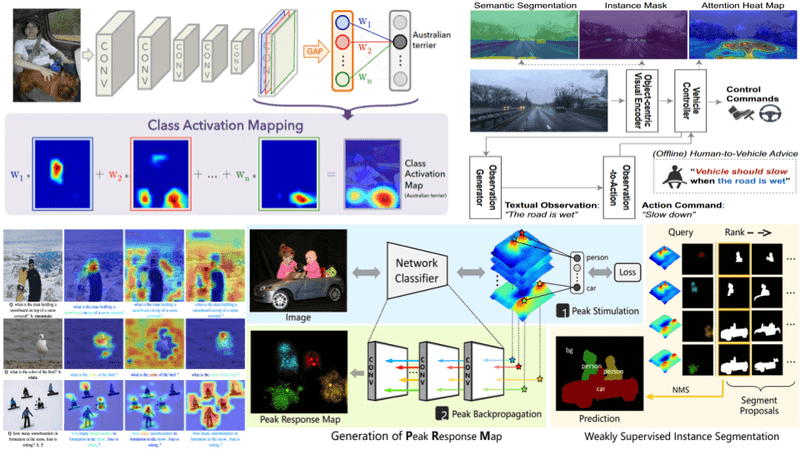

Explainable AI, or XAI, can help address "algorithm aversion" by providing insight into the decisions a system makes, thereby building trust. Taking an XAI approach enables both humans and machines to perform at their best in sectors such as manufacturing.

Explainability aims to answer stakeholder questions about the decision-making processes of AI systems. Developers and ML practitioners can use explanations to ensure that ML model and AI system project requirements are met during building, debugging, and testing.
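One common way developers use explanations while debugging is permutation feature importance, a model-agnostic technique swapped in here for illustration (the source does not name a specific method). The sketch below, assuming a hypothetical defect detector running on made-up sensor readings, shuffles one feature at a time and measures how much accuracy drops. All names (`defect_model`, `make_toy_data`, the feature names) and the 80-degree threshold are invented for the example.

```python
import random
from typing import Callable, Sequence

Row = dict[str, float]
Model = Callable[[Row], int]


def accuracy(model: Model, rows: Sequence[Row], labels: Sequence[int]) -> float:
    """Fraction of rows the model labels correctly."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model: Model, rows: Sequence[Row], labels: Sequence[int],
                           feature: str, n_repeats: int = 20, seed: int = 0) -> float:
    """Average drop in accuracy when one feature's values are shuffled across rows.

    Shuffling breaks the link between that feature and the labels: a large drop
    means the model relies on the feature, a drop of zero means it ignores it.
    """
    rng = random.Random(seed)
    base = accuracy(model, rows, labels)
    drops = []
    for _ in range(n_repeats):
        values = [r[feature] for r in rows]
        rng.shuffle(values)
        shuffled = [{**r, feature: v} for r, v in zip(rows, values)]
        drops.append(base - accuracy(model, shuffled, labels))
    return sum(drops) / n_repeats


def defect_model(row: Row) -> int:
    """Hypothetical detector: flags a part above 80 degrees C, never reads vibration."""
    return int(row["temperature_c"] > 80.0)


def make_toy_data(n: int = 200, seed: int = 42) -> tuple[list[Row], list[int]]:
    """Hypothetical synthetic sensor readings; labels follow the same temperature rule."""
    rng = random.Random(seed)
    rows = [{"temperature_c": rng.uniform(60, 100), "vibration": rng.uniform(0, 1)}
            for _ in range(n)]
    labels = [int(r["temperature_c"] > 80.0) for r in rows]
    return rows, labels


if __name__ == "__main__":
    rows, labels = make_toy_data()
    for feature in ("temperature_c", "vibration"):
        score = permutation_importance(defect_model, rows, labels, feature)
        print(f"{feature}: {score:.3f}")
```

Here vibration scores exactly zero because the toy model never reads it, while temperature scores high. In a real project, a feature that scores near zero despite being expected to matter (or one that scores high but should be irrelevant) is a cue to check the model or the data.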

What are the 3 C’s of AI : Any intelligent system has three major components of intelligence: comparison, computation, and cognition. In any intelligent action, these three C’s form a sequential process.